Chapter 17: Virtual Environments

17.3 Hypermedia and Art

In 1978 Andy Lippman and a group of researchers from MIT (including Michael Naimark and Scott Fisher) developed what is probably the first true hypermedia system. The Aspen Movie Map was what they termed “a surrogate travel application”: it allowed the user to take a simulated ride through the city of Aspen, Colorado.

The system used a set of videodisks containing photographs of all the streets of Aspen. Recording was done by means of four cameras, each pointing in a different direction, mounted on a truck. Photos were taken every 3 meters. At any point the user could continue straight ahead, back up, or move left or right.

Each photo was linked to the other relevant photos to support these movements. In theory the system could display 30 images per second, simulating a speed of about 200 mph (330 km/h). The system was artificially slowed down to at most 10 images per second, or about 68 mph (110 km/h).
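The linking and the speed figures above can be illustrated with a minimal sketch. It is not the original videodisk software: the `PhotoNode` class, the movement names, and the `step` and `simulated_speed_kmh` helpers are hypothetical names chosen for illustration, and the only values taken from the text are the 3 meter spacing and the two frame rates.

```python
from dataclasses import dataclass, field

PHOTO_SPACING_M = 3.0  # spacing between photos, as stated in the text


@dataclass
class PhotoNode:
    """One photo on the videodisk, linked to the photos reachable from it."""
    frame_id: int
    # Hypothetical link table: movement name -> frame_id of the next photo.
    links: dict = field(default_factory=dict)


def step(nodes, current, move):
    """Follow one link; stay on the current photo if the move is unavailable."""
    return nodes[current].links.get(move, current)


def simulated_speed_kmh(frames_per_second, spacing_m=PHOTO_SPACING_M):
    """Apparent travel speed when photos taken spacing_m apart are replayed."""
    metres_per_second = frames_per_second * spacing_m
    return metres_per_second * 3.6  # 1 m/s = 3.6 km/h


# Three consecutive photos along one street (illustrative data only).
nodes = {
    0: PhotoNode(0, {"forward": 1}),
    1: PhotoNode(1, {"forward": 2, "back": 0}),
    2: PhotoNode(2, {"back": 1}),
}

position = step(nodes, 0, "forward")      # moves to photo 1
position = step(nodes, position, "left")  # no left link here, stays on photo 1

full_rate = simulated_speed_kmh(30)  # 30 photos/s * 3 m = 90 m/s
capped = simulated_speed_kmh(10)     # 10 photos/s * 3 m = 30 m/s
```

With exactly 3 meters between photos this gives about 324 km/h at 30 images per second and about 108 km/h at 10 images per second, close to the quoted 330 km/h and 110 km/h. The small gap suggests that either the spacing or the quoted speeds are rounded.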

Movie 17.1 Aspen Map example

To make the demo more lively, the user could stop in front of some of the major buildings of Aspen and walk inside; the interiors of many buildings had also been filmed for the videodisk. The system used two screens, a vertical one for the video and a horizontal one that showed the street map of Aspen. The user could point to a spot on the map and jump directly to it instead of finding her way through the city.

Working on human-computer interaction at the University of Wisconsin in the late 1960s and early 1970s, Myron Krueger experimented with and developed several computer art projects.

After several other experiments, VIDEOPLACE was created. The computer had control over the relationship between the participant’s image and the objects in the graphic scene, and it could coordinate the movement of a graphic object with the actions of the participant. While gravity affected the physical body, it did not control or confine the image, which could float if needed. A series of simulations could be programmed based on any action. VIDEOPLACE offered over 50 compositions and interactions (including Critter, Individual Medley, Fractal, Finger Painting, Digital Drawing, Body Surfacing, Replay, and others).

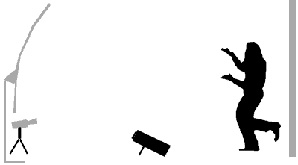

In the installation, the participant faced a video-projection screen while the screen behind him was backlit to produce high-contrast images for the camera (placed in front of the projection screen), allowing the computer to distinguish the participant from the background.

The participant’s image was then digitized to create silhouettes, which were analyzed by specialized processors. The processors could analyze the silhouette’s posture, its rate of movement, and its relationship to other graphic objects in the system. They could then react to the movement of the participant with a series of responses, either visual or auditory. Two or more environments could also be linked.
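The kind of analysis described above can be sketched in a few lines of software. This is only an illustration under stated assumptions, not a reconstruction of Krueger’s specialized processors: the function names, the threshold of 128, the tiny frames, and the `critter_box` rectangle are all hypothetical. It assumes that the backlit participant appears dark against a bright background.

```python
import math

def silhouette(frame, threshold=128):
    """Mark pixels darker than the threshold as part of the participant.

    frame is a list of rows of grayscale values, 0 (dark) to 255 (bright).
    The threshold is an assumed value, not one taken from VIDEOPLACE.
    """
    return [[pixel < threshold for pixel in row] for row in frame]


def centroid(mask):
    """Mean (x, y) of the silhouette pixels, or None if the mask is empty."""
    points = [(x, y) for y, row in enumerate(mask)
              for x, inside in enumerate(row) if inside]
    if not points:
        return None
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def touches(mask, box):
    """True if any silhouette pixel lies inside box = (x0, y0, x1, y1), inclusive."""
    x0, y0, x1, y1 = box
    # Clip the box to the frame so out-of-range boxes do not raise errors.
    y_range = range(max(y0, 0), min(y1, len(mask) - 1) + 1)
    x_range = range(max(x0, 0), min(x1, len(mask[0]) - 1) + 1)
    return any(mask[y][x] for y in y_range for x in x_range)


# Two consecutive 4x3 frames: a dark two-pixel "body" moves one column right.
frame_a = [[255, 255, 255, 255],
           [255,  20, 255, 255],
           [255,  20, 255, 255]]
frame_b = [[255, 255, 255, 255],
           [255, 255,  20, 255],
           [255, 255,  20, 255]]

mask_a, mask_b = silhouette(frame_a), silhouette(frame_b)
(ax, ay), (bx, by) = centroid(mask_a), centroid(mask_b)
movement = math.hypot(bx - ax, by - ay)  # pixels moved between the two frames

critter_box = (2, 1, 3, 2)  # hypothetical graphic object occupying columns 2-3
contact_before = touches(mask_a, critter_box)
contact_after = touches(mask_b, critter_box)
```

In this toy example the silhouette shifts by one pixel between frames and comes into contact with the graphic object only in the second frame, which is the kind of event a composition could respond to with a visual or auditory reaction.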

In 1983 Krueger published his now-famous book Artificial Reality, which was updated in 1990.